Numerical differentiation

In-game article clicks load inline without leaving the challenge.

In numerical analysis, numerical differentiation algorithms estimate the derivative of a mathematical function or subroutine using values of the function. Unlike analytical differentiation, which provides exact expressions for derivatives, numerical differentiation relies on the function's values at a set of discrete points to estimate the derivative's value at those points or at intermediate points. This approach is particularly useful when dealing with data obtained from experiments, simulations, or situations where the function is defined only at specific intervals.

Finite differences

The simplest method is to use finite difference approximations.

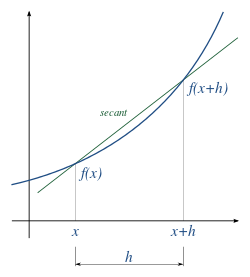

A simple two-point estimation is to compute the slope of a nearby secant line through the points (x, f(x)) and (x + h, f(x + h)). Choosing a small number h, h represents a small change in x, and it can be either positive or negative. The slope of this line is f ( x + h ) − f ( x ) h . {\displaystyle {\frac {f(x+h)-f(x)}{h}}.} This expression is Newton's difference quotient (also known as a first-order divided difference).

To obtain an error estimate for this approximation, one can use Taylor expansion of f ( x ) {\displaystyle f(x)} about the base point x {\displaystyle x} to give f ( x + h ) = f ( x ) + h f ′ ( x ) + h 2 2 f ″ ( c ) {\displaystyle f(x+h)=f(x)+hf'(x)+{\frac {h^{2}}{2}}f''(c)} for some c {\displaystyle c} between x {\displaystyle x} and x + h {\displaystyle x+h}. Rearranging gives f ′ ( x ) = f ( x + h ) − f ( x ) h ⏟ Slope of secant line − h 2 f ″ ( c ) ⏟ Error term . {\displaystyle f'(x)=\underbrace {\frac {f(x+h)-f(x)}{h}} _{\text{Slope of secant line}}-\underbrace {{\frac {h}{2}}f''(c)} _{\text{Error term}}.} The slope of this secant line differs from the slope of the tangent line by an amount that is approximately proportional to h. As h approaches zero, the slope of the secant line approaches the slope of the tangent line and the error term vanishes. Therefore, the true derivative of f at x is the limit of the value of the difference quotient as the secant lines get closer and closer to being a tangent line: f ′ ( x ) = lim h → 0 f ( x + h ) − f ( x ) h . {\displaystyle f'(x)=\lim _{h\to 0}{\frac {f(x+h)-f(x)}{h}}.}

Since immediately substituting 0 for h results in 0 0 {\displaystyle {\frac {0}{0}}} indeterminate form, calculating the derivative directly can be unintuitive.

Equivalently, the slope could be estimated by employing positions x − h and x.

Another two-point formula is to compute the slope of a nearby secant line through the points (x − h, f(x − h)) and (x + h, f(x + h)). The slope of this line is f ( x + h ) − f ( x − h ) 2 h . {\displaystyle {\frac {f(x+h)-f(x-h)}{2h}}.}

This formula is known as the symmetric difference quotient. In this case the first-order errors cancel, so the slope of these secant lines differ from the slope of the tangent line by an amount that is approximately proportional to h 2 {\displaystyle h^{2}}. Hence for small values of h this is a more accurate approximation to the tangent line than the one-sided estimation. However, although the slope is being computed at x, the value of the function at x is not involved.

The estimation error is given by R = − f ( 3 ) ( c ) 6 h 2 , {\displaystyle R={\frac {-f^{(3)}(c)}{6}}h^{2},} where c {\displaystyle c} is some point between x − h {\displaystyle x-h} and x + h {\displaystyle x+h}. This error does not include the rounding error due to numbers being represented and calculations being performed in limited precision.

The symmetric difference quotient is employed as the method of approximating the derivative in a number of calculators, including TI-82, TI-83, TI-84, TI-85, all of which use this method with h = 0.001.

Step size

An important consideration in practice when the function is calculated using floating-point arithmetic of finite precision is the choice of step size, h. To illustrate, consider the two-point approximation formula with error term:

f ′ ( x ) = f ( x + h ) − f ( x − h ) 2 h − f ( 3 ) ( c ) 6 h 2 {\displaystyle f'(x)={\frac {f(x+h)-f(x-h)}{2h}}-{\frac {f^{(3)}(c)}{6}}h^{2}}

where c {\displaystyle c} is some point between x − h {\displaystyle x-h} and x + h {\displaystyle x+h}. Let e ( x ) {\displaystyle e(x)} denote the roundoff error encountered when evaluating the function f ( x ) {\displaystyle f(x)} and f ^ ( x ) {\displaystyle {\hat {f}}(x)} denote the computed value of f ( x ) {\displaystyle f(x)}. Therefore, f ( x ) = f ^ ( x ) + e ( x ) . {\displaystyle f(x)={\hat {f}}(x)+e(x).} The total error in the approximation is f ′ ( x ) − f ^ ( x + h ) − f ^ ( x − h ) 2 h = e ( x + h ) − e ( x − h ) 2 h ⏟ Roundoff error − f ( 3 ) ( c ) 6 h 2 ⏟ Truncation error . {\displaystyle f'(x)-{\frac {{\hat {f}}(x+h)-{\hat {f}}(x-h)}{2h}}=\underbrace {\frac {e(x+h)-e(x-h)}{2h}} _{\text{Roundoff error}}-\underbrace {{\frac {f^{(3)}(c)}{6}}h^{2}} _{\text{Truncation error}}.} Assuming that the roundoff errors are bounded by some number ϵ > 0 {\displaystyle \epsilon >0} and the third derivative of f ( x ) {\displaystyle f(x)} is bounded by some number M > 0 {\displaystyle M>0}, we get | f ′ ( x ) − f ^ ( x + h ) − f ^ ( x − h ) 2 h | ≤ ϵ h + h 2 6 M . {\displaystyle \left|f'(x)-{\frac {{\hat {f}}(x+h)-{\hat {f}}(x-h)}{2h}}\right|\leq {\frac {\epsilon }{h}}+{\frac {h^{2}}{6}}M.}

To reduce the truncation error h 2 6 M {\displaystyle {\frac {h^{2}}{6}}M}, we must reduce h. But as h is reduced, the roundoff error ϵ h {\displaystyle {\frac {\epsilon }{h}}} increases. Due to the need to divide by the small value h, all the finite-difference formulae for numerical differentiation are similarly ill-conditioned.

If instead the derivative is approximated using f ′ ( x ) = f ( x + h ) − f ( x ) h {\displaystyle f'(x)={\frac {f(x+h)-f(x)}{h}}} and we assume the relative approximation error due to rounding is bounded by machine epsilon and the values | f ″ ( x ) | {\displaystyle |f''(x)|} and | f ( x ) | {\displaystyle |f(x)|} are bounded on [ x 0 , x 0 + h ] {\displaystyle [x_{0},x_{0}+h]} by M 1 {\displaystyle M_{1}}and M 2 {\displaystyle M_{2}} respectively it can be shown that:| f ′ ( x 0 ) − f ^ ( x 0 + h ) − f ^ ( x 0 ) h | ≤ h 2 M 1 + 2 ϵ h M 2 {\displaystyle \left|f'(x_{0})-{\frac {{\hat {f}}(x_{0}+h)-{\hat {f}}(x_{0})}{h}}\right|\leq {\frac {h}{2}}M_{1}+{\frac {2\epsilon }{h}}M_{2}}By minimizing this upper bound an estimate for the optimal step size can be obtained. However, employing this method would require the knowledge of the bounds M 1 {\displaystyle M_{1}} and M 2 {\displaystyle M_{2}}. Instead, if an approximate upper bound is used - one where M 1 {\displaystyle M_{1}}and M 2 {\displaystyle M_{2}} are replaced by | f ″ ( x 0 ) | {\displaystyle |f''(x_{0})|} and | f ( x 0 ) | {\displaystyle |f(x_{0})|} the error can be estimated as:e ( h ) = h 2 | f ″ ( x 0 ) | + 2 ϵ h | f ( x 0 ) | {\displaystyle e(h)={\frac {h}{2}}|f''(x_{0})|+{\frac {2\epsilon }{h}}|f(x_{0})|}By minimizing e ( h ) {\displaystyle e(h)} an estimate for the optimal step size h can be found: h = 2 ε | f ( x ) f ″ ( x ) | {\displaystyle h=2{\sqrt {\varepsilon \left|{\frac {f(x)}{f''(x)}}\right|}}}(though not when f ″ ( x ) = 0 {\displaystyle f''(x)=0}). If no information about the function f {\displaystyle f} is known, sometimes the approximation | f ( x ) f ″ ( x ) | ≈ x {\displaystyle {\sqrt {\left|{\frac {f(x)}{f''(x)}}\right|}}\approx x} is used giving a choice for h that is small without producing a large rounding error: ε x {\displaystyle {\sqrt {\varepsilon }}x}, where the machine epsilon ε is typically of the order of 2.2×10−16 for double precision. In practice, one cannot compute the value that minimizes the above error bounds and therefore cannot compute an approximation of the optimal step size without information about higher-order derivatives.

Example

To demonstrate this difficulty, consider approximating the derivative of the function f ( x ) = 2 x 1 + x {\displaystyle f(x)={\frac {2x}{1+{\sqrt {x}}}}} at the point x 0 = 9 {\displaystyle x_{0}=9}. In this case, we can calculate f ′ ( x ) = 2 + x ( 1 + x ) 2 {\displaystyle f'(x)={\frac {2+{\sqrt {x}}}{(1+{\sqrt {x}})^{2}}}} which gives f ′ ( 9 ) = 2 + 9 ( 1 + 9 ) 2 = 2 + 3 ( 1 + 3 ) 2 = 5 16 = 0.3125. {\displaystyle f'(9)={\frac {2+{\sqrt {9}}}{(1+{\sqrt {9}})^{2}}}={\frac {2+3}{(1+3)^{2}}}={\frac {5}{16}}=0.3125.} Using 64-bit floating point numbers, the following approximations are generated with the two-point approximation formula and increasingly smaller step sizes. The smallest absolute error is produced for a step size of 10 − 4 {\displaystyle 10^{-4}}, after which the absolute error steadily increases as the roundoff errors dominate calcuations.

| Step Size (h) | Approximation | Absolute Error |

|---|---|---|

| 10 − 1 {\displaystyle 10^{-1}} | 0.31250397877426117 {\displaystyle 0.31250397877426117} | 3.97877 × 10 − 6 {\displaystyle 3.97877\times 10^{-6}} |

| 10 − 2 {\displaystyle 10^{-2}} | 0.31250003978589014 {\displaystyle 0.31250003978589014} | 3.97858 × 10 − 8 {\displaystyle 3.97858\times 10^{-8}} |

| 10 − 3 {\displaystyle 10^{-3}} | 0.3125000003976197 {\displaystyle 0.3125000003976197} | 3.97619 × 10 − 10 {\displaystyle 3.97619\times 10^{-10}} |

| 10 − 4 {\displaystyle 10^{-4}} | 0.31250000000593303 {\displaystyle 0.31250000000593303} | 5.93303 × 10 − 12 {\displaystyle 5.93303\times 10^{-12}} |

| 10 − 5 {\displaystyle 10^{-5}} | 0.3124999999215561 {\displaystyle 0.3124999999215561} | 7.84439 × 10 − 11 {\displaystyle 7.84439\times 10^{-11}} |

| 10 − 6 {\displaystyle 10^{-6}} | 0.31249999965510256 {\displaystyle 0.31249999965510256} | 3.44897 × 10 − 10 {\displaystyle 3.44897\times 10^{-10}} |

| 10 − 7 {\displaystyle 10^{-7}} | 0.31249999921101335 {\displaystyle 0.31249999921101335} | 7.88986 × 10 − 10 {\displaystyle 7.88986\times 10^{-10}} |

| 10 − 8 {\displaystyle 10^{-8}} | 0.31250002585636594 {\displaystyle 0.31250002585636594} | 2.58563 × 10 − 8 {\displaystyle 2.58563\times 10^{-8}} |

| 10 − 9 {\displaystyle 10^{-9}} | 0.31250024790097086 {\displaystyle 0.31250024790097086} | 2.47900 × 10 − 7 {\displaystyle 2.47900\times 10^{-7}} |

| 10 − 10 {\displaystyle 10^{-10}} | 0.31249669518729206 {\displaystyle 0.31249669518729206} | 3.30481 × 10 − 6 {\displaystyle 3.30481\times 10^{-6}} |

| 10 − 11 {\displaystyle 10^{-11}} | 0.31246116805050406 {\displaystyle 0.31246116805050406} | 3.88319 × 10 − 5 {\displaystyle 3.88319\times 10^{-5}} |

| 10 − 12 {\displaystyle 10^{-12}} | 0.312194714524594 {\displaystyle 0.312194714524594} | 3.05285 × 10 − 4 {\displaystyle 3.05285\times 10^{-4}} |

| 10 − 13 {\displaystyle 10^{-13}} | 0.31530333899354446 {\displaystyle 0.31530333899354446} | 2.80333 × 10 − 3 {\displaystyle 2.80333\times 10^{-3}} |

| 10 − 14 {\displaystyle 10^{-14}} | 3.552713678800501 {\displaystyle 3.552713678800501} | 4.27713 × 10 − 2 {\displaystyle 4.27713\times 10^{-2}} |

For computer calculations the problems are exacerbated because, although x necessarily holds a representable floating-point number in some precision (32 or 64-bit, etc.), x + h almost certainly will not be exactly representable in that precision. This means that x + h will be changed (by rounding or truncation) to a nearby machine-representable number, with the consequence that (x + h) − x will not equal h; the two function evaluations will not be exactly h apart. In this regard, since most decimal fractions are recurring sequences in binary (just as 1/3 is in decimal) a seemingly round step such as h = 0.1 will not be a round number in binary; it is 0.000110011001100...2 A possible approach is as follows:

However, with computers, compiler optimization facilities may fail to attend to the details of actual computer arithmetic and instead apply the axioms of mathematics to deduce that dx and h are the same. With C and similar languages, a directive that xph is a volatile variable will prevent this.

Three Point methods

To obtain more general derivative approximation formulas for some function f ( x ) {\displaystyle f(x)}, let h > 0 {\displaystyle h>0} be a positive number close to zero. The Taylor expansion of f ( x ) {\displaystyle f(x)} about the base point x {\displaystyle x} is

| f ( x + h ) = f ( x ) + h f ′ ( x ) + h 2 2 ! f ″ ( x ) + h 3 3 ! f ‴ ( x ) + . . . {\displaystyle f(x+h)=f(x)+hf'(x)+{\frac {h^{2}}{2!}}f''(x)+{\frac {h^{3}}{3!}}f'''(x)+...} |

Replacing h {\displaystyle h} by 2 h {\displaystyle 2h} gives

| f ( x + 2 h ) = f ( x ) + 2 h f ′ ( x ) + 4 h 2 2 ! f ″ ( x ) + 8 h 3 3 ! f ‴ ( x ) + . . . {\displaystyle f(x+2h)=f(x)+2hf'(x)+{\frac {4h^{2}}{2!}}f''(x)+{\frac {8h^{3}}{3!}}f'''(x)+...} |

Multiplying identity (1) by 4 gives

| 4 f ( x + h ) = 4 f ( x ) + 4 h f ′ ( x ) + 4 h 2 2 ! f ″ ( x ) + 4 h 3 3 ! f ‴ ( x ) + . . . {\displaystyle 4f(x+h)=4f(x)+4hf'(x)+{\frac {4h^{2}}{2!}}f''(x)+{\frac {4h^{3}}{3!}}f'''(x)+...} |

Subtracting identity (1') from (2) eliminates the h 2 {\displaystyle h^{2}} term:

f ( x + 2 h ) − 4 f ( x + h ) = − 3 f ( x ) − 2 h f ′ ( x ) + 4 h 3 3 ! f ‴ ( x ) + . . . {\displaystyle f(x+2h)-4f(x+h)=-3f(x)-2hf'(x)+{\frac {4h^{3}}{3!}}f'''(x)+...}

which can be written as

f ( x + 2 h ) − 4 f ( x + h ) = − 3 f ( x ) − 2 h f ′ ( x ) + O ( h 3 ) . {\displaystyle f(x+2h)-4f(x+h)=-3f(x)-2hf'(x)+O(h^{3}).}

Rearranging terms gives f ′ ( x ) = − 3 f ( x ) + 4 f ( x + h ) − f ( x + 2 h ) 2 h + O ( h 2 ) , {\displaystyle f'(x)={\frac {-3f(x)+4f(x+h)-f(x+2h)}{2h}}+O(h^{2}),}

which is called the three-point forward difference formula for the derivative. Using a similar approach, one can show

f ′ ( x ) = f ( x + h ) − f ( x − h ) 2 h + O ( h 2 ) {\displaystyle f'(x)={\frac {f(x+h)-f(x-h)}{2h}}+O(h^{2})} which is called the three-point central difference formula, and f ′ ( x ) = f ( x − 2 h ) − 4 f ( x − h ) + 3 f ( x ) 2 h + O ( h 2 ) {\displaystyle f'(x)={\frac {f(x-2h)-4f(x-h)+3f(x)}{2h}}+O(h^{2})} which is called the three-point backward difference formula.

By a similar approach, the five point midpoint approximation formula can be derived as: f ′ ( x ) = − f ( x + 2 h ) + 8 f ( x + h ) − 8 f ( x − h ) + f ( x − 2 h ) 12 h + O ( h 4 ) . {\displaystyle f'(x)={\frac {-f(x+2h)+8f(x+h)-8f(x-h)+f(x-2h)}{12h}}+O(h^{4}).}

Numerical Example

Consider approximating the derivative of f ( x ) = x sin x {\displaystyle f(x)=x\sin {x}} at the point x 0 = π 4 {\displaystyle x_{0}={\frac {\pi }{4}}}. Since f ′ ( x ) = sin x + x cos x {\displaystyle f'(x)=\sin {x}+x\cos {x}}, the exact value is f ′ ( π 4 ) = sin π 4 + π 4 cos π 4 = 1 2 + π 4 2 ≈ 1.2624671484563432. {\displaystyle f'({\frac {\pi }{4}})=\sin {\frac {\pi }{4}}+{\frac {\pi }{4}}\cos {\frac {\pi }{4}}={\frac {1}{\sqrt {2}}}+{\frac {\pi }{4{\sqrt {2}}}}\approx 1.2624671484563432.}

| Formula | Step Size (h) | Approximation | Absolute Error |

|---|---|---|---|

| Three-point forward difference formula | 10 − 1 {\displaystyle 10^{-1}} | 1.2719084899816118 {\displaystyle 1.2719084899816118} | 9.441 × 10 − 3 {\displaystyle 9.441\times 10^{-3}} |

| 10 − 2 {\displaystyle 10^{-2}} | 1.2625569346253918 {\displaystyle 1.2625569346253918} | 8.978 × 10 − 5 {\displaystyle 8.978\times 10^{-5}} | |

| 10 − 3 {\displaystyle 10^{-3}} | 1.2624680412510747 {\displaystyle 1.2624680412510747} | 8.927 × 10 − 7 {\displaystyle 8.927\times 10^{-7}} | |

| 10 − 4 {\displaystyle 10^{-4}} | 1.2624671573796542 {\displaystyle 1.2624671573796542} | 8.923 × 10 − 9 {\displaystyle 8.923\times 10^{-9}} | |

| Three-point backward difference formula | 10 − 1 {\displaystyle 10^{-1}} | 1.2719084899816118 {\displaystyle 1.2719084899816118} | 8.307 × 10 − 3 {\displaystyle 8.307\times 10^{-3}} |

| 10 − 2 {\displaystyle 10^{-2}} | 1.2625569346253918 {\displaystyle 1.2625569346253918} | 8.864 × 10 − 5 {\displaystyle 8.864\times 10^{-5}} | |

| 10 − 3 {\displaystyle 10^{-3}} | 1.2624680412510747 {\displaystyle 1.2624680412510747} | 8.916 × 10 − 7 {\displaystyle 8.916\times 10^{-7}} | |

| 10 − 4 {\displaystyle 10^{-4}} | 1.2624671573796542 {\displaystyle 1.2624671573796542} | 8.922 × 10 − 9 {\displaystyle 8.922\times 10^{-9}} | |

| Three-point central difference formula | 10 − 1 {\displaystyle 10^{-1}} | 1.2719084899816118 {\displaystyle 1.2719084899816118} | 4.457 × 10 − 3 {\displaystyle 4.457\times 10^{-3}} |

| 10 − 2 {\displaystyle 10^{-2}} | 1.2625569346253918 {\displaystyle 1.2625569346253918} | 4.461 × 10 − 5 {\displaystyle 4.461\times 10^{-5}} | |

| 10 − 3 {\displaystyle 10^{-3}} | 1.2624680412510747 {\displaystyle 1.2624680412510747} | 4.461 × 10 − 7 {\displaystyle 4.461\times 10^{-7}} | |

| 10 − 4 {\displaystyle 10^{-4}} | 1.2624671573796542 {\displaystyle 1.2624671573796542} | 4.461 × 10 − 9 {\displaystyle 4.461\times 10^{-9}} |

Code

The following is an example of a Python implementation for finding derivatives numerically for f ( x ) = 2 x 1 + x {\displaystyle f(x)={\frac {2x}{1+{\sqrt {x}}}}} using the various three-point difference formulas at x 0 = 4 {\displaystyle x_{0}=4}. The function func has derivative func_prime.

| Example implementation in Python |

| importmath deffunc(x): return (2*x) / (1 + math.sqrt(x)) deffunc_prime(x): return (2 + math.sqrt(x)) / ((1 + math.sqrt(x))**2) defthree_point_forward(value, h): return ((-3/2) * func(value) + 2*func(value + h) - (1/2)*func(value + 2*h)) / h defthree_point_central(value, h): return ((-1/2)*func(value - h) + (1/2)*func(value + h)) / h defthree_point_backward(value, h): return ((1/2)*func(value - 2*h) - 2*func(value - h) + (3/2)*func(value)) / h value = 4 actual = func_prime(value) print("Actual value " + str(actual)) print("============================================") for step_size in [0.1, 0.01, 0.001, 0.0001]: print("Step size " + str(step_size)) forward = three_point_forward(value, step_size) backward = three_point_backward(value, step_size) central = three_point_central(value, step_size) print("Forward{:>12}, Error ={:>12}".format(str(forward), str(abs(forward - actual)))) print("Backward{:>12}, Error ={:>12}".format(str(forward), str(abs(backward - actual)))) print("Central{:>12}, Error ={:>12}".format(str(forward), str(abs(central - actual)))) print("============================================") |

| Output |

| Actual value 0.4444444444444444 ============================================ Step size 0.1 Forward 0.4443963018050967, Error = 4.814263934771468e-05 Backward 0.4443963018050967, Error = 2.5082646145202503e-05 Central 0.4443963018050967, Error = 5.231976394060034e-05 ============================================ Step size 0.01 Forward 0.4444439449793336, Error = 4.994651108258807e-07 Backward 0.4444439449793336, Error = 2.507721614808389e-07 Central 0.4444439449793336, Error = 5.036366184096863e-07 ============================================ Step size 0.001 Forward 0.4444444394311464, Error = 5.013297998957e-09 Backward 0.4444444394311464, Error = 2.507574814458735e-09 Central 0.4444444394311464, Error = 5.017960935660426e-09 ============================================ Step size 0.0001 Forward 0.4444444443896245, Error = 5.4819926376126205e-11 Backward 0.4444444443896245, Error = 2.5116131396885066e-11 Central 0.4444444443896245, Error = 5.037903427762558e-11 ============================================ |

Higher derivatives

Using Taylor Series, it is possible to derive formulas to approximate the second (and higher order) derivatives of a general function. For a function f ( x ) {\displaystyle f(x)} and some number h > 0 {\displaystyle h>0}, expanding it about x + h {\displaystyle x+h} and x − h {\displaystyle x-h} gives

f ( x + h ) = f ( x ) + f ′ ( x ) h + 1 2 f ″ ( x ) h 2 + 1 6 f ‴ ( x ) h 3 + 1 24 f ( 4 ) ( ξ 1 ) h 4 {\displaystyle f(x+h)=f(x)+f'(x)h+{\frac {1}{2}}f''(x)h^{2}+{\frac {1}{6}}f'''(x)h^{3}+{\frac {1}{24}}f^{(4)}(\xi _{1})h^{4}} and f ( x − h ) = f ( x ) − f ′ ( x ) h + 1 2 f ″ ( x ) h 2 − 1 6 f ‴ ( x ) h 3 + 1 24 f ( 4 ) ( ξ 2 ) h 4 {\displaystyle f(x-h)=f(x)-f'(x)h+{\frac {1}{2}}f''(x)h^{2}-{\frac {1}{6}}f'''(x)h^{3}+{\frac {1}{24}}f^{(4)}(\xi _{2})h^{4}} where x − h < ξ 2 < x < ξ 1 < x + h {\displaystyle x-h<\xi _{2}<x<\xi _{1}<x+h}. Adding these two equations gives

f ( x + h ) + f ( x − h ) = 2 f ( x ) + f ″ ( x ) h 2 + 1 24 [ f ( 4 ) ( ξ 1 ) + f ( 4 ) ( ξ 2 ) ] h 4 . {\displaystyle f(x+h)+f(x-h)=2f(x)+f''(x)h^{2}+{\frac {1}{24}}\left[f^{(4)}(\xi _{1})+f^{(4)}(\xi _{2})\right]h^{4}.}

If f ( 4 ) {\displaystyle f^{(4)}} is continuous on [ x − h , x + h ] {\displaystyle [x-h,x+h]}, then 1 2 [ f ( 4 ) ( ξ 1 ) + f ( 4 ) ( ξ 2 ) ] {\displaystyle {\frac {1}{2}}\left[f^{(4)}(\xi _{1})+f^{(4)}(\xi _{2})\right]} is between f ( 4 ) ( ξ 1 ) {\displaystyle f^{(4)}(\xi _{1})} and f ( 4 ) ( ξ 2 ) {\displaystyle f^{(4)}(\xi _{2})}. The Intermediate Value Theorem guarantees a number, say ξ {\displaystyle \xi }, between ξ 1 {\displaystyle \xi _{1}} and ξ 2 {\displaystyle \xi _{2}}. We can therefore write

f ″ ( x ) = 1 h 2 [ f ( x − h ) − 2 f ( x ) + f ( x + h ) ] − h 2 12 f ( 4 ) ( ξ ) . {\displaystyle f''(x)={\frac {1}{h^{2}}}\left[f(x-h)-2f(x)+f(x+h)\right]-{\frac {h^{2}}{12}}f^{(4)}(\xi ).}

where x − h < ξ < x + h {\displaystyle x-h<\xi <x+h}.

Numerical Example

Consider approximating the second derivative of the function f ( x ) = e x sin x {\displaystyle f(x)=e^{x}\sin {x}} at the point x 0 = π 4 {\displaystyle x_{0}={\frac {\pi }{4}}}.

Since f ″ ( x ) = 2 e x cos x {\displaystyle f''(x)=2e^{x}\cos {x}}, the exact value is f ″ ( π 4 ) = 2 e π 4 ≈ 3.1017663 {\displaystyle f''({\frac {\pi }{4}})={\sqrt {2}}e^{\frac {\pi }{4}}\approx 3.1017663}.

| Step Size (h) | Approximation | Absolute Error |

|---|---|---|

| 10 − 1 {\displaystyle 10^{-1}} | 3.096593338003672 {\displaystyle 3.096593338003672} | 5.17305 × 10 − 3 {\displaystyle 5.17305\times 10^{-3}} |

| 10 − 2 {\displaystyle 10^{-2}} | 3.1017146973844056 {\displaystyle 3.1017146973844056} | 5.16964 × 10 − 5 {\displaystyle 5.16964\times 10^{-5}} |

| 10 − 3 {\displaystyle 10^{-3}} | 3.1017658768117684 {\displaystyle 3.1017658768117684} | 5.17024 × 10 − 7 {\displaystyle 5.17024\times 10^{-7}} |

| 10 − 4 {\displaystyle 10^{-4}} | 3.1017663770782633 {\displaystyle 3.1017663770782633} | 1.67577 × 10 − 8 {\displaystyle 1.67577\times 10^{-8}} |

Arbitrary Derivatives

Using Newton's difference quotient, f ′ ( x ) = lim h → 0 f ( x + h ) − f ( x ) h {\displaystyle f'(x)=\lim _{h\to 0}{\frac {f(x+h)-f(x)}{h}}} the following can be shown (for n > 0): f ( n ) ( x ) = lim h → 0 1 h n ∑ k = 0 n ( − 1 ) n − k ( n k ) f ( x + k h ) {\displaystyle f^{(n)}(x)=\lim _{h\to 0}{\frac {1}{h^{n}}}\sum _{k=0}^{n}(-1)^{n-k}{\binom {n}{k}}f(x+kh)}

Complex-variable methods

The classical finite-difference approximations for numerical differentiation are ill-conditioned. However, if f {\displaystyle f} is a holomorphic function, real-valued on the real line, which can be evaluated at points in the complex plane near x {\displaystyle x}, then there are stable methods. For example, the first derivative can be calculated by the complex-step derivative formula: f ′ ( x ) = ℑ ( f ( x + i h ) ) h + O ( h 2 ) , i 2 := − 1. {\displaystyle f'(x)={\frac {\Im (f(x+\mathrm {i} h))}{h}}+O(h^{2}),\quad \mathrm {i^{2}} :=-1.}

The recommended step size to obtain accurate derivatives for a range of conditions is h = 10 − 200 {\displaystyle h=10^{-200}}. This formula can be obtained by Taylor series expansion: f ( x + i h ) = f ( x ) + i h f ′ ( x ) − 1 2 ! h 2 f ″ ( x ) − i 3 ! h 3 f ( 3 ) ( x ) + ⋯ . {\displaystyle f(x+\mathrm {i} h)=f(x)+\mathrm {i} hf'(x)-{\tfrac {1}{2!}}h^{2}f''(x)-{\tfrac {\mathrm {i} }{3!}}h^{3}f^{(3)}(x)+\cdots .}

The complex-step derivative formula is only valid for calculating first-order derivatives. A generalization of the above for calculating derivatives of any order employs multicomplex numbers, resulting in multicomplex derivatives. f ( n ) ( x ) ≈ C n 2 − 1 ( n ) ( f ( x + i ( 1 ) h + ⋯ + i ( n ) h ) ) h n {\displaystyle f^{(n)}(x)\approx {\frac {{\mathcal {C}}_{n^{2}-1}^{(n)}(f(x+\mathrm {i} ^{(1)}h+\cdots +\mathrm {i} ^{(n)}h))}{h^{n}}}} where the i ( k ) {\displaystyle \mathrm {i} ^{(k)}} denote the multicomplex imaginary units; i ( 1 ) ≡ i {\displaystyle \mathrm {i} ^{(1)}\equiv \mathrm {i} }. The C k ( n ) {\displaystyle {\mathcal {C}}_{k}^{(n)}} operator extracts the k {\displaystyle k}th component of a multicomplex number of level n {\displaystyle n}, e.g., C 0 ( n ) {\displaystyle {\mathcal {C}}_{0}^{(n)}} extracts the real component and C n 2 − 1 ( n ) {\displaystyle {\mathcal {C}}_{n^{2}-1}^{(n)}} extracts the last, “most imaginary” component. The method can be applied to mixed derivatives, e.g. for a second-order derivative ∂ 2 f ( x , y ) ∂ x ∂ y ≈ C 3 ( 2 ) ( f ( x + i ( 1 ) h , y + i ( 2 ) h ) ) h 2 {\displaystyle {\frac {\partial ^{2}f(x,y)}{\partial x\,\partial y}}\approx {\frac {{\mathcal {C}}_{3}^{(2)}(f(x+\mathrm {i} ^{(1)}h,y+\mathrm {i} ^{(2)}h))}{h^{2}}}}

A C++ implementation of multicomplex arithmetics is available.

In general, derivatives of any order can be calculated using Cauchy's integral formula: f ( n ) ( a ) = n ! 2 π i ∮ γ f ( z ) ( z − a ) n + 1 d z , {\displaystyle f^{(n)}(a)={\frac {n!}{2\pi i}}\oint _{\gamma }{\frac {f(z)}{(z-a)^{n+1}}}\,\mathrm {d} z,} where the integration is done numerically.

Using complex variables for numerical differentiation was started by Lyness and Moler in 1967. Their algorithm is applicable to higher-order derivatives.

A method based on numerical inversion of a complex Laplace transform was developed by Abate and Dubner. An algorithm that can be used without requiring knowledge about the method or the character of the function was developed by Fornberg.

Differential quadrature

Differential quadrature is the approximation of derivatives by using weighted sums of function values. Differential quadrature is of practical interest because its allows one to compute derivatives from noisy data. The name is in analogy with quadrature, meaning numerical integration, where weighted sums are used in methods such as Simpson's rule or the trapezoidal rule. There are various methods for determining the weight coefficients, for example, the Savitzky–Golay filter. Differential quadrature is used to solve partial differential equations. There are further methods for computing derivatives from noisy data.

See also

- Automatic differentiation – Numerical calculations carrying along derivatives

- Five-point stencil – Point and its four nearest neighbors

- List of numerical-analysis software

- Numerical integration – Methods of calculating definite integrals

- Numerical methods for ordinary differential equations – Methods used to find numerical solutions of ordinary differential equations

- Savitzky–Golay filter – Algorithm to smooth data points

External links

- from wolfram.com

- by Nicholas Higham, SIAM News.